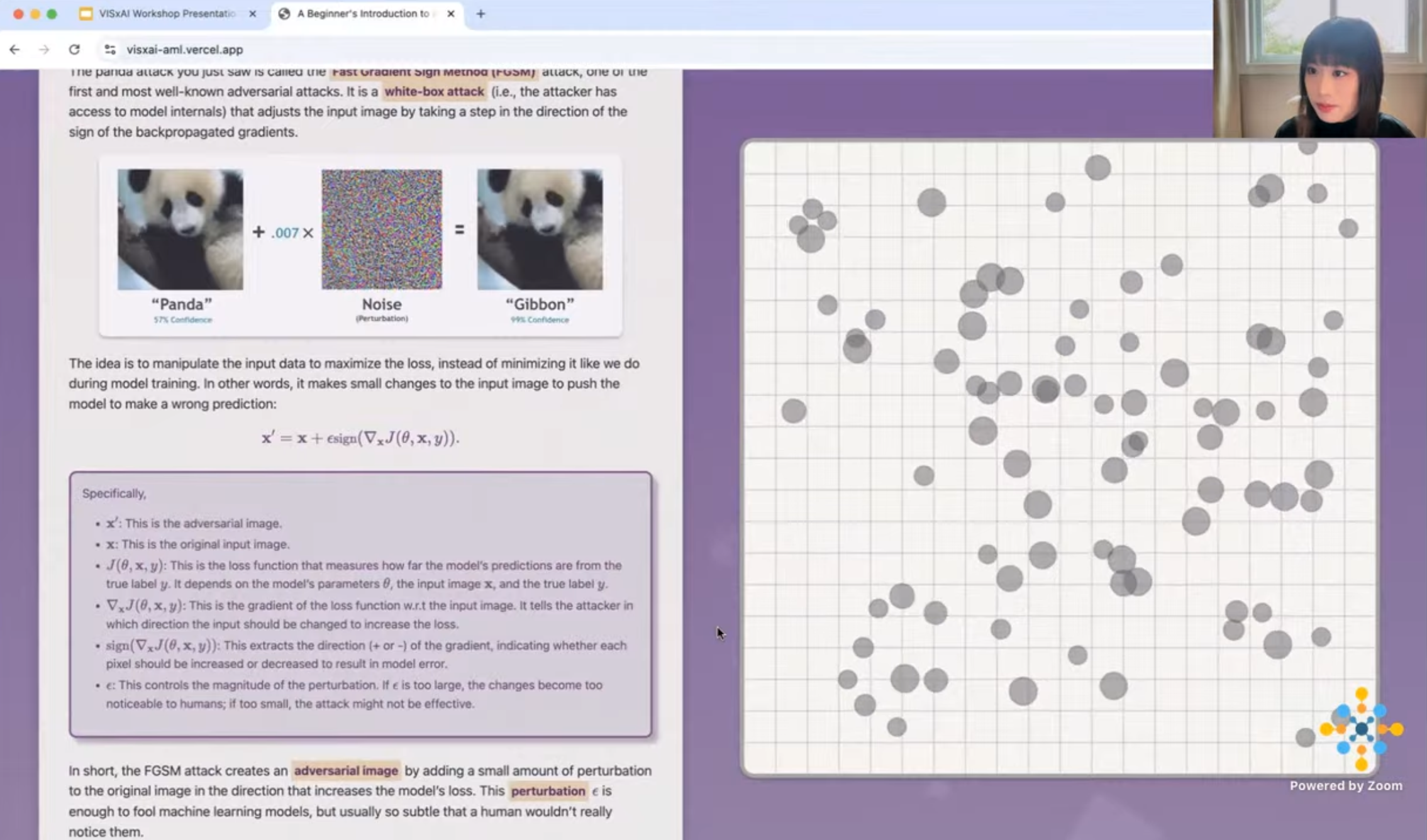

Think you can spot the difference? The AI can't. *Panda or Gibbon? A Beginner's Introduction to Adversarial Attacks* is an interactive, beginner-friendly visualization that introduces how machine-learning models can be fooled by malicious adversarial attacks. Built primarily with D3.js and Idyll, my guide focuses on the Fast Gradient Sign Method (FGSM) and shows how tiny, human-imperceptible tweaks to an image can push a ResNet-34 model into making confident mistakes. You can compare clean and subtly perturbed images, explore how these attacks shift model behavior, and examine two versions of ResNet-34, one trained with standard training and one trained with adversarial training, to see how they respond differently.
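To make the FGSM idea concrete, here is a minimal sketch of a single FGSM step. It is not the code behind the guide and does not use ResNet-34: it assumes a tiny, hypothetical logistic-regression classifier over four "pixel" values so that the input gradient can be written by hand with only the Python standard library. The weights, bias, input, and `epsilon` are all made-up illustrative values.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Probability of class 1 under a toy logistic-regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return sigmoid(z)


def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)


def fgsm(weights, bias, x, label, epsilon):
    """One FGSM step: x_adv = x + epsilon * sign(dLoss/dx).

    For binary cross-entropy on a logistic model, the gradient of the
    loss with respect to input feature i is (p - label) * w_i.
    """
    p = predict(weights, bias, x)
    grad = [(p - label) * w for w in weights]
    x_adv = [xi + epsilon * sign(g) for xi, g in zip(x, grad)]
    # Keep the perturbed values inside the valid pixel range [0, 1].
    return [min(1.0, max(0.0, v)) for v in x_adv]


# Hypothetical toy model and input (illustrative values only).
weights = [2.0, -3.0, 1.5, -1.0]
bias = 0.1
x = [0.6, 0.4, 0.5, 0.5]
label = 1  # the true class of x

clean_prob = predict(weights, bias, x)
x_adv = fgsm(weights, bias, x, label, epsilon=0.1)
adv_prob = predict(weights, bias, x_adv)

print("clean P(class 1):", round(clean_prob, 3))
print("adversarial P(class 1):", round(adv_prob, 3))
```

With these toy values, no feature moves by more than 0.1, yet the predicted probability of the true class drops from above 0.5 to below it, so the predicted label flips. In a deep network such as ResNet-34 the same update rule applies, but the input gradient would come from backpropagation rather than a closed-form expression.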

- Accepted and presented at the 7th VISxAI Workshop at IEEE VIS 2024 (see the VISxAI Workshop Program Info).

- Explains adversarial attacks using beginner-friendly interactive visualizations.

- Explores the FGSM attack's impact on ResNet-34 models, with insights into both natural and adversarial images, as well as standard and adversarial training.

- Includes embedding-level and instance-level analysis to show how adversarial perturbations affect models.

Presentation at the VISxAI Workshop

Video of my presentation at the 7th VISxAI Workshop (starts at 1:30:44)

Explore the live VISxAI demo.

Watch the walkthrough on YouTube.

- Python

- PyTorch

- t-SNE

- Machine Learning

- Computer Vision

- Adversarial Machine Learning

- XAI Visualization

- D3.js

- Idyll-lang

- Adversarial Machine Learning

- FGSM Attack

- Adversarial Attack

- Image Classification

- Visualization

- ResNet

- Model Robustness

Yuzhe You, Jian Zhao